Introduction

The car industry is undergoing a major shift, fueled by advances in autonomous technology and the desire for safer, more efficient driving experiences. One of the most important components of this change is the Advanced Driver Assistance System (ADAS), which relies largely on sensor fusion and data integration. These technologies form the foundation of modern vehicle intelligence, allowing vehicles to observe and interpret the driving environment with human-like awareness, or better.

Sensor fusion combines information from multiple sensors deployed on the vehicle, such as cameras, radar, LiDAR, and ultrasonic sensors, together with onboard systems like GPS and the IMU, to produce a comprehensive and accurate understanding of the driving environment. This blog examines the significance, technology, difficulties, and potential of these interconnected systems in shaping the future of mobility.

Understanding ADAS Sensor Fusion and Data Integration: Essential Features and Sensor Roles

Vehicles have changed over the last 20 years from being mechanical systems to highly digital platforms with some degree of autonomy. Features of contemporary ADAS include:

- Adaptive Cruise Control (ACC): Automatically adjusts the vehicle’s speed to maintain a safe following distance.

- Lane-Keeping Assistance (LKA): Helps the driver stay within the boundaries of the lane.

- Automatic Emergency Braking (AEB): Engages brakes when it detects an impending collision.

- Blind-Spot Monitoring (BSM): Informs the driver of vehicles in neighbouring lanes.

- Parking Assistance: Uses sensor feedback to help steer into confined parking spaces.

Real-time environmental sensing is essential to all of these systems. But no single sensor can provide complete coverage in every situation. Sensor fusion becomes essential at that point.

ADAS Sensor Fusion and Data Integration: What is it?

Sensor fusion is the process of combining data from different kinds of sensors to create a more comprehensive, accurate, and dependable perceptual model of the surroundings. By leveraging each sensor’s advantages and compensating for its disadvantages, it helps ADAS overcome the constraints of individual sensors.

For example:

- Although they provide sharp images, cameras are sensitive to illumination.

- Radar lacks clear pictures, yet it can detect objects in bad weather.

- LiDAR provides accurate 3D mapping, although it is less effective in heavy rain or snow.

- Although they work well at close range, ultrasonic sensors are not appropriate for high-speed detection.

By combining these inputs, the ADAS can create a reliable and fault-tolerant model of the environment around the car.
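As a minimal sketch of how complementary inputs can be combined, the Python snippet below fuses two range estimates of the same object by inverse-variance weighting, so the more certain sensor contributes more. The function name `fuse_estimates`, the readings, and the variances are illustrative assumptions, not values from any particular ADAS platform.

```python
def fuse_estimates(measurements):
    """Fuse independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by 1 / variance. Returns (value, variance)
    of the fused estimate.
    """
    if not measurements:
        raise ValueError("need at least one measurement")
    weights = [1.0 / var for _, var in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    return value, 1.0 / total


# Hypothetical distance to a lead vehicle, in metres.
camera = (41.0, 4.0)  # assumed noisier range estimate (variance 4.0 m^2)
radar = (40.0, 1.0)   # assumed tighter range estimate (variance 1.0 m^2)

fused_range, fused_var = fuse_estimates([camera, radar])
print(f"fused range = {fused_range:.2f} m, variance = {fused_var:.2f} m^2")
# prints: fused range = 40.20 m, variance = 0.80 m^2
```

The fused variance (0.80) is lower than that of either input, which is the sense in which the combined estimate is more dependable than any single sensor. The weighting assumes the sensor errors are independent.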

Types of Sensors and Their Functions in ADAS

Let’s examine the main ADAS sensors and see how they contribute to data fusion:

1. Cameras

The primary purpose is object recognition and classification.

Examples of Use:

- Lane recognition

- Recognition of traffic signs

- Identification of pedestrians and vehicles

Pros: Detailed object classification, color recognition, and high-resolution imaging.

Cons: Subject to bad weather, glare, and inadequate illumination.

2. Radar (Radio Detection and Ranging)

The primary goal is to detect objects using radio waves.

Examples of Use:

- Adaptive cruise control

- Collision avoidance

- Measuring distance and speed

Pros: Works effectively in rain, fog, and darkness.

Cons: Unable to accurately distinguish between different object shapes.

3. LiDAR (Light Detection and Ranging)

The main purpose is to map 3D space with laser beams.

Examples of Use:

- Identifying obstacles

- Modelling the environment

- Self-driving cars

Pros: Excellent spatial resolution and depth accuracy.

Cons: Costly; in fog, rain, or snow, performance degrades.

4. Ultrasonic Sensors

The primary purpose is to detect nearby objects using sound waves.

Examples of Use:

- Help with parking

- Blind-spot detection

- Alerts for proximity in slow traffic

Pros: Inexpensive and effective at short range.

Cons: Ineffective over longer distances or at high speeds.

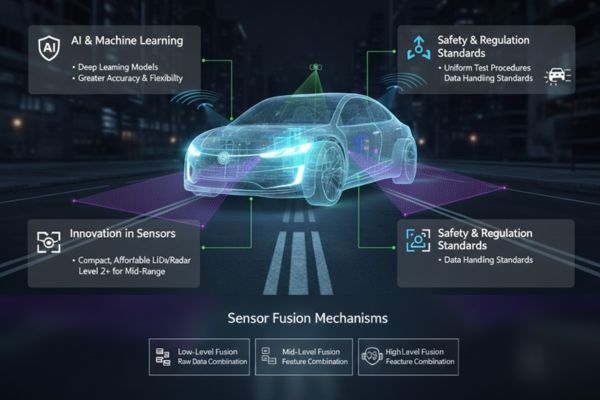

The Sensor Fusion Mechanisms

Depending on the architecture and application requirements, sensor fusion can be implemented at several system levels. These include data-level (low-level), feature-level (mid-level), and decision-level (high-level) fusion, each offering distinct advantages and trade-offs.

1. Low-Level (Raw Data) Fusion

Combines sensor data in its raw form, before pre-processing. Provides excellent accuracy but requires a lot of processing power.

2. Mid-Level (Feature-Level) Fusion

Data is processed into features (distances, edges, etc.) before fusion. Strikes a balance between resource usage and performance.

3. High-Level (Decision-Level) Fusion

Combines data or judgments that each sensor has already interpreted. Easier to implement but may reduce system responsiveness.

In order to provide a cohesive picture of the environment, the fusion layer must process massive streams of data in real time. To achieve this, it must effectively remove inconsistencies, filter out noise, and resolve sensor conflicts.
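To make the idea of resolving sensor conflicts more concrete, here is a hedged sketch of fusion at the object level: camera and radar detections are matched by nearest neighbour within a distance gate, and each fused object takes its class from the camera and its speed from the radar. The class names, the `gate` value, and the choice of which sensor supplies which coordinate are assumptions made for illustration only.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CameraObject:
    label: str   # class reported by the camera pipeline
    x: float     # longitudinal position in the vehicle frame, metres
    y: float     # lateral position, metres


@dataclass
class RadarObject:
    x: float
    y: float
    speed: float  # relative speed, m/s


@dataclass
class FusedObject:
    label: str
    x: float
    y: float
    speed: Optional[float]


def fuse_objects(cams: List[CameraObject], radars: List[RadarObject],
                 gate: float = 2.0) -> List[FusedObject]:
    """Greedy nearest-neighbour association within a distance gate."""
    unused = list(radars)
    fused = []
    for cam in cams:
        best = min(unused,
                   key=lambda r: math.hypot(r.x - cam.x, r.y - cam.y),
                   default=None)
        if best and math.hypot(best.x - cam.x, best.y - cam.y) <= gate:
            unused.remove(best)
            # Assumed split: radar for range and speed, camera for lateral
            # position and class.
            fused.append(FusedObject(cam.label, best.x, cam.y, best.speed))
        else:
            fused.append(FusedObject(cam.label, cam.x, cam.y, None))
    # Radar returns that no camera object explains are kept, unclassified.
    fused.extend(FusedObject("unknown", r.x, r.y, r.speed) for r in unused)
    return fused


cams = [CameraObject("car", 30.5, 0.2), CameraObject("pedestrian", 12.0, -3.0)]
radars = [RadarObject(30.0, 0.4, -2.5), RadarObject(55.0, 3.5, 1.0)]
fused = fuse_objects(cams, radars)
for obj in fused:
    print(obj)
```

Greedy matching is the simplest association strategy. Production systems often use a globally optimal assignment and track objects over time, which this sketch leaves out.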

4. Integration with Additional Vehicle Data Sources

Sensor fusion encompasses more than just perception sensors. ADAS platforms also interface with:

- GPS: Provides location information.

- IMU (Inertial Measurement Unit): Tracks the vehicle’s orientation and motion using accelerometers and gyroscopes.

- V2X (Vehicle-to-Everything) communication: Exchanges data between vehicles and infrastructure, such as traffic lights and intelligent road signs.

This wider data integration enables better route planning, enhanced contextual awareness, and anticipatory responses, all of which are essential for achieving Level 3+ autonomy.
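One common way to combine GPS and IMU data is a Kalman filter: IMU acceleration drives a fast prediction step, and slower GPS fixes correct the accumulated drift. The sketch below is a deliberately simplified one-axis version written in plain Python. The class name `GpsImuFilter`, all noise variances, and the noise-free simulated data are assumptions chosen to keep the example small and repeatable.

```python
class GpsImuFilter:
    """Two-state (position, velocity) Kalman filter along one axis."""

    def __init__(self, position, velocity, pos_var, vel_var,
                 accel_var, gps_var):
        self.p, self.v = position, velocity
        # Covariance matrix entries [[p00, p01], [p10, p11]].
        self.p00, self.p01, self.p10, self.p11 = pos_var, 0.0, 0.0, vel_var
        self.accel_var = accel_var  # assumed IMU acceleration noise variance
        self.gps_var = gps_var      # assumed GPS position noise variance

    def predict(self, accel, dt):
        """Dead-reckon forward using one IMU acceleration sample."""
        self.p += self.v * dt + 0.5 * accel * dt * dt
        self.v += accel * dt
        # P = F P F^T + Q with F = [[1, dt], [0, 1]].
        p00 = self.p00 + dt * (self.p01 + self.p10) + dt * dt * self.p11
        p01 = self.p01 + dt * self.p11
        p10 = self.p10 + dt * self.p11
        q = self.accel_var
        self.p00 = p00 + 0.25 * dt ** 4 * q
        self.p01 = p01 + 0.5 * dt ** 3 * q
        self.p10 = p10 + 0.5 * dt ** 3 * q
        self.p11 = self.p11 + dt * dt * q

    def update(self, gps_position):
        """Correct the predicted state with a GPS position fix."""
        innovation = gps_position - self.p
        s = self.p00 + self.gps_var
        k0, k1 = self.p00 / s, self.p10 / s  # Kalman gain
        self.p += k0 * innovation
        self.v += k1 * innovation
        p00, p01 = self.p00, self.p01
        self.p00 = (1.0 - k0) * p00
        self.p01 = (1.0 - k0) * p01
        self.p10 = self.p10 - k1 * p00
        self.p11 = self.p11 - k1 * p01


# Simulated drive: constant 1 m/s^2 acceleration, IMU at 10 Hz, GPS at 1 Hz.
dt, true_accel = 0.1, 1.0
kf = GpsImuFilter(position=5.0, velocity=0.0,  # start 5 m off on purpose
                  pos_var=25.0, vel_var=1.0, accel_var=0.25, gps_var=4.0)
true_p, true_v = 0.0, 0.0
for step in range(1, 101):
    true_p += true_v * dt + 0.5 * true_accel * dt * dt
    true_v += true_accel * dt
    kf.predict(true_accel, dt)
    if step % 10 == 0:
        kf.update(true_p)

position_error = kf.p - true_p
print(f"position error after 10 s: {position_error:.3f} m")
```

The filter starts with a 5 m position error and pulls it toward zero as GPS fixes arrive, while the IMU keeps the estimate moving between fixes. A real vehicle would need a multi-axis state, measured noise parameters, and handling of GPS outages.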

Benefits of ADAS Sensor Integration and Fusion

1. Increased Redundancy and Safety

Even if one sensor malfunctions or gives inaccurate data, ADAS maintains performance by utilizing several data points.
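A simple way to picture this redundancy is a median-based consistency check: readings that disagree strongly with the consensus are flagged and excluded. The snippet below is a sketch under assumed values. The function name `robust_range`, the 3 m threshold, and the sensor readings are hypothetical.

```python
from statistics import median


def robust_range(readings, max_deviation=3.0):
    """Return (consensus_range, outlier_names) from redundant sensors.

    `readings` maps a sensor name to a range in metres, or None when
    the sensor produced no measurement.
    """
    valid = {name: r for name, r in readings.items() if r is not None}
    if not valid:
        raise ValueError("no sensor produced a measurement")
    center = median(valid.values())
    outliers = sorted(name for name, r in valid.items()
                      if abs(r - center) > max_deviation)
    inliers = [r for name, r in valid.items() if name not in outliers]
    if not inliers:
        # Sensors disagree completely; fall back to the median itself.
        return center, outliers
    return sum(inliers) / len(inliers), outliers


readings = {
    "radar": 40.1,
    "lidar": 39.8,
    "camera": 12.0,      # simulated faulty reading
    "ultrasonic": None,  # out of range, no measurement
}
consensus, outliers = robust_range(readings)
print(f"consensus = {consensus:.2f} m, flagged = {outliers}")
# prints: consensus = 39.95 m, flagged = ['camera']
```

The check needs at least three agreeing sources to be meaningful. With only two sensors it can detect a disagreement but cannot tell which one is wrong.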

2. Better Perception of the Environment

Combined sensors can more precisely detect a wider range of objects and situations, such as young children next to a curb or rapidly approaching motorcycles in blind spots.

3. Increased Dependability of the System

By combining data from multiple sources, fusion reduces false positives and false negatives in object identification. This improves decision-making and leads to smoother interventions.
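The effect on error rates can be illustrated with a small calculation. Assume, purely for illustration, three sensors that err independently, each with a 5% false-alarm rate and a 90% detection rate, and a rule that confirms an object only when at least two of the three report it. The function name and all rates below are hypothetical.

```python
from math import comb


def prob_at_least_k(n, k, p):
    """Probability that at least k of n independent events occur,
    each with probability p (binomial tail)."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i)
               for i in range(k, n + 1))


single_false_alarm = 0.05  # assumed per-sensor false-positive rate
single_detection = 0.90    # assumed per-sensor detection rate

# A 2-of-3 vote raises a false alarm only if two sensors err together.
fused_false_alarm = prob_at_least_k(3, 2, single_false_alarm)
# It misses an object only if fewer than two sensors detect it.
fused_miss = 1.0 - prob_at_least_k(3, 2, single_detection)

print(f"false alarms: {single_false_alarm:.4f} -> {fused_false_alarm:.5f}")
print(f"misses:       {1 - single_detection:.4f} -> {fused_miss:.5f}")
```

Under these assumptions both error types fall: false alarms from 5% to about 0.7%, and misses from 10% to 2.8%. Real sensor errors are rarely fully independent (heavy rain can degrade several sensors at once), so actual gains are smaller.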

4. The Basis for Full Autonomy

To make decisions without human intervention, Level 4 and 5 autonomous cars rely heavily on extensive, fused information.

Challenges in Implementing ADAS Sensor Fusion

Notwithstanding the benefits, incorporating sensor fusion systems comes with several financial and technological challenges:

1. Computational Complexity

Real-time fusion needs high-speed processors and reliable software to handle data from thirty or more sensors. Algorithms have to strike a compromise between power consumption, accuracy, and latency.

2. Calibration and Synchronization

To ensure reliable performance, sensors need to be time-synchronized and accurately calibrated. Otherwise, any misalignment can compromise system judgments by causing inaccurate data fusion.
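Both requirements can be sketched in a few lines. The first function below aligns two sensors in time by linearly interpolating one sensor's samples to the other's timestamp. The second applies an extrinsic calibration, mapping a point from a sensor's own frame into the vehicle frame. The function names, mounting position, and sample values are illustrative assumptions.

```python
import math


def interpolate(t, t0, v0, t1, v1):
    """Linearly interpolate a measurement to time t, given samples
    (t0, v0) and (t1, v1) that bracket it."""
    if t1 == t0:
        raise ValueError("samples must have distinct timestamps")
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)


def sensor_to_vehicle(x, y, mount_x, mount_y, mount_yaw):
    """Transform a 2D point from the sensor frame to the vehicle frame.

    (mount_x, mount_y) is the sensor position in the vehicle frame and
    mount_yaw its rotation in radians: the assumed extrinsic calibration.
    """
    cos_a, sin_a = math.cos(mount_yaw), math.sin(mount_yaw)
    return (mount_x + cos_a * x - sin_a * y,
            mount_y + sin_a * x + cos_a * y)


# Radar samples at 0.00 s and 0.05 s; the camera frame was taken at 0.02 s.
range_at_frame = interpolate(0.02, 0.00, 20.0, 0.05, 19.5)
print(f"radar range aligned to camera frame: {range_at_frame:.2f} m")

# A point 10 m straight ahead of a side-facing sensor (yaw of 90 degrees)
# mounted 3.5 m forward and 0.5 m left of the vehicle origin.
vx, vy = sensor_to_vehicle(10.0, 0.0, 3.5, 0.5, math.pi / 2)
print(f"point in vehicle frame: ({vx:.2f}, {vy:.2f})")
```

If the timestamps or mounting angles are wrong, the same physical object lands at different places in the vehicle frame for different sensors, which is the misalignment problem described above.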

3. High Development Cost

Software development, computation platforms, and sensors all raise the overall cost of the vehicle. OEMs must justify these expenses through demonstrable gains in safety and consumer value.

4. Bandwidth and Data Storage

Storing and transmitting gigabytes of sensor data every minute takes a lot of resources, so effective data management techniques are essential for efficiency and scalability.
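A back-of-the-envelope estimate shows where figures of this size come from. The sensor suite below is hypothetical, and every count, rate, and sample size is an assumption for illustration. The data is treated as raw and uncompressed.

```python
# Hypothetical sensor suite: (count, bytes per sample, samples per second).
SENSORS = {
    # 1920 x 1080 pixels, 3 bytes per pixel, 30 frames per second
    "camera_1080p": (4, 1920 * 1080 * 3, 30),
    # assumed 16 bytes per point, 300,000 points per second
    "lidar": (1, 16, 300_000),
    # assumed 2,000 bytes per scan, 20 scans per second
    "radar": (5, 2_000, 20),
}


def bytes_per_second(sensors):
    """Total raw data rate of the suite in bytes per second."""
    return sum(count * size * rate for count, size, rate in sensors.values())


total_bps = bytes_per_second(SENSORS)
gb_per_minute = total_bps * 60 / 1e9

print(f"raw data rate: {total_bps / 1e6:.1f} MB/s")
print(f"per minute:    {gb_per_minute:.2f} GB")
```

With these assumed numbers, the four uncompressed cameras dominate the total of roughly 45 GB per minute, which is why compression, region-of-interest cropping, and selective logging matter in practice.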

Prospects for ADAS Sensor Fusion and Data Integration

Developments in the following areas will influence ADAS sensor fusion in the future:

1. Machine Learning and Artificial Intelligence

Deep learning models are expected to be used increasingly to interpret fused data in dynamic situations. Such models offer greater accuracy and flexibility, making them well suited to complex and rapidly changing environments.

2. Edge Computing

Edge AI processors are becoming more popular in ADAS platforms because they enable real-time decision-making without requiring cloud connectivity.

3. Innovation in Sensors

With the ongoing development of more compact, power-efficient, and reasonably priced LiDAR and radar systems, mid-range vehicles are increasingly gaining access to Level 2+ technologies. Consequently, we expect this trend to accelerate the democratization of advanced driver assistance features.

4. Standards for Safety and Regulation

To ensure safety and interoperability, regulatory organizations are now establishing uniform test procedures and data handling standards for ADAS Sensor Fusion and Data Integration systems. As a result, compliance with these standards will significantly accelerate mass adoption.

Conclusion

ADAS sensor fusion and data integration represent a significant advancement in the development of intelligent, autonomous, and safe automobiles. Modern ADAS architectures provide improved situational awareness, redundancy, and perception by combining data from several sensor types and onboard devices. These features transform our understanding of mobility and set the stage for completely autonomous driving.

As the industry matures, sensor fusion will develop further, become more affordable, and be included in standard car platforms. With our state-of-the-art offerings in Vehicle Control Units (VCUs), CAN Displays, CAN Keypads, and E/E Software Development and Engineering Staffing Services, we at Dorleco are honored to help shape this future.